Whose 'Intelligence' Is It Anyway?

Looking at AI, cognition and the human brain to conceptualize 'Intelligence' in Artificial Intelligence.

With the number of news articles and research papers that we see these days on the benefits that Artificial Intelligence (AI) and Machine Learning (ML) may hold for humanity as a whole, it would not be entirely implausible to call the times we live in the ‘Age of AI’. There have, of course, been discussions of the possibility of creating an Artificial General Intelligence (AGI), an advancement over our current levels of Artificial Intelligence, which would “indicate the same scope of intelligence as we see in human action: that in any real situation behavior appropriate to the ends of the system and adaptive to the demands of the environment can occur, within some limits of speed and complexity”.

Before I embark on this, I must admit that I am woefully lacking in knowledge and understanding of the complexities that such aspects involve when compared to the stalwarts in such fields. If you believe that I have misrepresented a fact or erred in my understanding anywhere, please do correct me!

I. Conceptualizing Intelligence

The idea of machines that can perform tasks that require ‘intelligence’ goes back at least to Descartes and Leibniz1. However, what is this idea of ‘intelligence’? More specifically, what are the various ways in which we conceive the modern interpretation of ‘intelligence’ in light of AI? The debate on the essence of intelligence is nothing new. It has been going on for decades, yet there still seems to be little sign of consensus.

Logically, AI researchers would need to undertake a two-step process that serves as a crude roadmap to creating AI: first, to choose a working definition of intelligence, and second, to produce it in a computer. Therefore, in this exercise of trying to define ‘intelligence’, it is important to ensure that we have an ‘operative definition’ of it. Such a definition would allow us to think clearly about the acceptable assumptions and desired results, which would in turn bind all the concrete work that follows. An improper definition may make such research more difficult than it would otherwise be, and a definitional defect could not, to my mind, be compensated for by the research or the output of such an endeavour.

In conventional terms, the definition of intelligence would perhaps be something akin to ‘an ability to solve hard problems’. Some may prefer another conventional understanding, wherein intelligence is defined as a general mental ability for reasoning, problem solving, and learning. Because of its general nature, intelligence in this sense is the successful integration of cognitive functions such as perception, attention, memory, language, or planning.

But current capabilities of AI often run into a multitude of problems when presented with multivariate situations of unbounded nature that possess the kind of complexity that one would expect a human to deal with:

What if a new processor is required when all existing processors are occupied? How do you structure and manage resources amongst the tasks? Can you even register such a task independently without human intervention?

What if extra memory is required when all available memory is already full? Does the next process get automatically turned down?

What if a task comes up with a time requirement, so that exhaustive search is not an option? What about questions where time is an intrinsic element of the efficacy of the answer?

What if new knowledge that the AI / AGI gains conflicts with previous knowledge? How do you reorient your (mechanical bearings?) beliefs and pick axioms that dictate which one holds true when?

What if a question is presented for which no sure answer can be deduced from available knowledge? How does one reason to a plausible solution then instead of drawing a blank?

II. Human-like Intelligence for AIs?

It has long been the desire of mankind to create (and of the media to speculate on the after-effects of creating) an artificial intelligence that can both recognize how the human brain works and replicate that process through computers and algorithms. Achieving similarity between artificial intelligence (or, more accurately, artificial general intelligence) and human intelligence is therefore something that we all aspire to. But one must ask, in what sense would these concepts be “similar”? To different people, the desired similarity may involve structure, behavior, capacity, function, or principle. Where would such a thing lead us?

III. AI as the ‘replicate’ of a human brain

The human brain has been under serious investigation by many researchers in the field of neuroscience. In times past, there were considerable investigations of the structure of the brain (its anatomy), but the functional operation of its complex neural network evaded study, and all sorts of fantasies paraded as knowledge for many centuries. Around the middle of the 18th century, a functional understanding of the human brain began to take shape. During this period, studies revealed that nerve impulses, previously considered to be “animal spirits”, are simply electrical signals similar to a charge in an electrical circuit2. Moreover, improvements in neuroscience research and microscopy have shown the structure and form of neurons, representing the brain as a network of neurons that communicate using chemical signals. For all intents and purposes, intelligence is produced by the human brain, so the logical consequence of any attempt to conceive intelligence in the context of AI would be that AI should attempt to simulate a brain in a computer system as faithfully as possible.

Such an opinion is put in its extreme form by neuroscientists Reeke and Edelman, who argue that “the ultimate goals of AI and neuroscience are quite similar”3. Though it sounds reasonable to identify AI with brain modeling, perhaps only a few AI researchers take such an approach in a very strict sense. Even the “neural network” movement is “not focused on neural modeling (i.e., the modeling of neurons), but rather focused on neurally inspired modeling of cognitive processes”4. Why? One obvious reason is the daunting complexity of this approach. Current technology is still not powerful enough to simulate a huge neural network, not to mention the fact that many mysteries about the brain remain. Neither our heads nor our research yet fully grasp what our brains do, or why. And definitely not with enough precision to make it sensible for us to create an algorithmic architecture that mirrors it.

The adult human brain consists of about 100 billion neurons, each with about 1,000 to 10,000 connections, making a total of 10^14 to 10^15 connections in the brain. It is perhaps the most complex system we know of, more complex than the entire mobile network of the world or all GPUs put together, with its vast number of neurons making and unmaking connections at a timescale that can be as short as a few tens of seconds.
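The totals quoted above follow from simple multiplication. A minimal sketch of that arithmetic, using only the round figures given in the text (the variable and function names are illustrative):

```python
# Rough arithmetic only: these are the round figures quoted in the text,
# not measured values.
NEURONS = 100 * 10**9                      # about 100 billion neurons
CONNECTIONS_PER_NEURON = (1_000, 10_000)   # lower and upper estimates


def total_connections(neurons, per_neuron):
    """Return the estimated total number of connections."""
    return neurons * per_neuron


low = total_connections(NEURONS, CONNECTIONS_PER_NEURON[0])
high = total_connections(NEURONS, CONNECTIONS_PER_NEURON[1])
print(f"between {low:.0e} and {high:.0e} connections")
```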

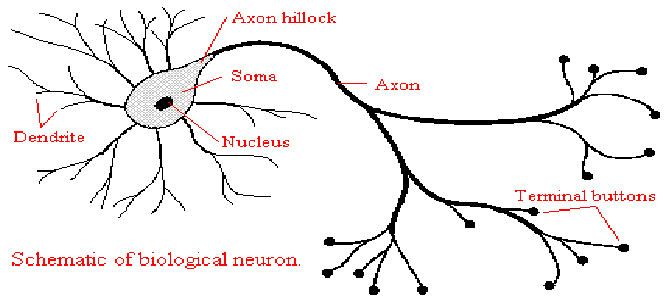

Sidebar 1: Neurons

Neurons are found in the brain, in the spinal cord, and in ganglia. They are specialized cells that receive input from other neurons and are capable of reacting to stimuli and conducting nerve impulses (Snell et al., 2010). Neurons differ in shape and size, and each neuron has a cell body from which processes called neurites project (Snell et al., 2010). The neurites responsible for receiving information and conducting it towards the cell body are called dendrites (Snell et al., 2010), while the axon conducts impulses away from the cell body. The term nerve fiber is used to refer to both dendrites and axons. Dendrites are branched projections that direct electrochemical stimulation, received from neighbouring neural cells via synapses located at a number of points around the dendritic arbor, to the cell body of the neuron from which they project.

Never mind the neurons in the brain: at the time of this writing, we have been unable to create even a computational or artificial model of a single paramecium. Moreover, even if we were able to build a brain model at the neuron level to any desired accuracy, it could not be called a success of AI, though it would be a success of neuroscience.

In that sense, AI may be more closely related to the concept of having a “model of mind”, that is, a high-level description of brain activity in which biological concepts do not appear5. A high-level description would, I believe, be preferred, not because a low-level description is impossible, but because the high-level one is usually simpler and more general. A distinctive characteristic of AI is the attempt to “get a mind without a brain”, that is, to describe mind in a medium-independent way. This is true for all models: in building a model, we concentrate on certain properties of an object or process and ignore irrelevant aspects; in so doing, we gain insights that are hard to discern in the object or process itself.

For this reason, an accurate duplication is not a model, and a model including unnecessary details is not a good model. If we agree that “brain” and “mind” are different concepts, then a good model of the brain is not a good model of the mind, though the former is useful for its own sake, and helpful for the building of the latter.

IV. AI as the ‘recreation’ of Human Behaviour

Given that we always judge the intelligence of other people by their behavior, it is natural to adopt an approach to AI that focuses on behaviours and expression as a signifier of intelligence. In such an instance, the aim of such an AI would be to accurately and precisely “reproduce the behavior produced by the human mind”. Such a working definition of intelligence asks researchers to use the Turing Test6 as a sufficient and necessary condition for having intelligence, and to take psychological evidence seriously.

Due to the nature of the Turing Test and the resource limitations of a concrete computer system, it is out of the question for the system to have stored in its memory all possible questions and proper answers in advance, and then to give a convincing imitation of a human being by searching such a list. The only realistic way to imitate human performance in a conversation is to produce the answers in real time. To do this, the system needs not only cognitive faculties, but also much prior “human experience”7. Therefore, it must have a body that feels human, it must have all human motivations (including biological ones), and it must be treated by people as a human being. In a sense, it must simply be an “artificial human”, rather than a computer system with artificial intelligence.

As Robert French points out, by using behavior as evidence, the Turing Test is a criterion solely for human intelligence, not for intelligence in general. Such an approach can lead to good psychological models, which are valuable for many reasons, but it suffers from “human chauvinism”: we would have to say, according to the definition, that the science-fiction alien creature E.T. was not intelligent, because it would definitely fail the Turing Test. But by all measures of the concept of intelligence, E.T. was as intellectually sound as any human of a similar standing would have been.

Though “reproducing human (verbal) behavior” may still be a sufficient condition for being intelligent (as suggested by Turing), such a goal is difficult, if not impossible, to achieve. More importantly, it is not a necessary condition for being intelligent, if we want “intelligence” to be a more general concept than merely “human intelligence”.

V. AI as the ‘solver of hard problems’

As I mentioned previously, one of the definitions that we accorded to intelligence (and which would therefore be desirable in an AGI) is the ability to solve hard problems. According to this type of formulation of intelligence, it is solely the capacity to solve hard problems that matters, and not how exactly the problems are solved.

What problems are “hard”? In the early days of AI, many researchers worked on intellectual activities like game playing and theorem proving. Nowadays, expert-system builders aim at “real-world problems” that crop up in various domains. The presumption behind this approach is: “Obviously, experts are intelligent, so if a computer system can solve problems that only experts can solve, the computer system must be intelligent, too”. This is why many people take the success of the chess-playing computer Deep Blue as the success of AI.

This movement has drawn in many researchers, produced many practically useful systems, attracted significant funding, and thus has made important contributions to the development of the AI enterprise. Usually, the systems are developed by analyzing domain knowledge and expert strategy, then building them into a computer system. However, though often profitable, these systems do not provide much insight into how the mind works. No wonder people ask, after learning how such a system works, “Where’s the AI?” These systems look just like ordinary computer application systems, and still suffer from great rigidity and brittleness (something that general AI wants to avoid).

If intelligence is defined as “the capacity to solve hard problems”, then the next question is: “Hard for whom?” If we say “hard for human beings”, then most existing computer software is already intelligent: no human can manage a database as well as a database management system, or substitute a word in a file as fast as an editing program. If we say “hard for computers,” then AI becomes “whatever hasn’t been done yet,” which has been dubbed “Tesler’s Theorem” (see sidebar)8. The view that AI is a “perpetually extending frontier” makes it attractive and exciting, deservedly so, but tells us little about how it differs from other research areas in computer science. Is it fair to say that the problems there are easy? If AI researchers cannot identify other commonalities of the problems they attack besides mere difficulty, they will be unlikely to make any progress in understanding and replicating intelligence.

Sidebar 2: Tesler's Theorem and the AI Effect

Named after Larry Tesler, the original adage (in his very own words) went something like “Intelligence is whatever machines haven't done yet”. Tesler’s Theorem was later restated by Douglas Hofstadter in his seminal work 'Gödel, Escher, Bach' in the following way: “Artificial intelligence is whatever hasn’t been done yet.”

This theorem points out the problem with defining the term ‘AI’, because it shows that what counts as AI is always changing. It renders AI an eternal goal. As such, future artificial intelligence will likely cover unreached machine uses that we haven’t thought of yet. Meanwhile, that which we view as AI functionality today will likely have found different, more suitable labels. This idea finds its validation in recent history: a lot of cutting-edge AI has been filtered, assimilated and integrated into other areas of general application, shedding the tag of ‘AI’ once the technology became commonplace enough. As a result, technology that might otherwise have held the AI label instead ends up hiding behind other fronts and tags (for instance, ‘expert systems’ rather than ‘AI’). Its constant redefinition is then its own worst enemy.

For instance, at one point, a robot that would vacuum your house without you lifting a finger would have been considered an example of AI. Nowadays, hardly anyone is impressed by a RoboVacuum. It used to be that a computer that could beat a human grandmaster at chess would have sufficed as AI. Today, we consider that to be little more than a clever computer algorithm. Think of it in terms of Arthur C. Clarke’s famous quote that “Any sufficiently advanced technology is indistinguishable from magic”. Once this technology fails to be sufficiently advanced, or once you understand the underpinning framework that drives it well enough, it seems to lose its magic.

The natural corollary of this argument would then be that AI will always be 10+ years away if we keep redefining it to exclude any successes we achieve, moving the goalposts, so to speak. Would that mean that the pursuit of an AGI, regardless of how advanced it may get, may be an illusory, unattainable goal that will always be hamstrung by Tesler’s Theorem and the AI effect?

VI. AI as the ‘executor of cognitive functions’

To draw your attention back to where we began this ‘ideation’, one of the ways in which we described and broke down intelligence was by characterizing it as a set of cognitive functions, such as reasoning, perception, memory, problem solving, language use, and so on. Subscribing to this view allows us to concentrate on just one of these functions, in the hope that research on all the functions can eventually be combined to yield a complete picture of intelligence. A “cognitive function” is often defined in a general and abstract manner. This approach has produced, and will continue to produce, tools in the form of software packages and even specialized hardware, each of which can carry out a function that is similar to certain mental skills of human beings, and therefore can be used in various domains for practical purposes.

However, this kind of success does not justify claiming that it is the proper way to understand or even build AI. To define intelligence as a “toolbox of functions” has serious weaknesses. When specified in isolation, an implemented function is often quite different from its “natural form” in the human mind. For example, to study analogy without perception would always lead to distorted cognitive models9. Can we really understand and build concepts such as reasoning and memory without first understanding and subsequently integrating perception into a model? Even if we can produce the desired tools, this does not mean that we can easily combine them, because different tools may be developed under different assumptions, which prevents the tools from being combined. The basic problem with the “toolbox” approach is: without a “big picture” in mind, the study of a cognitive function in an isolated, abstracted, and often distorted form simply does not contribute to our understanding of intelligence.

A common counterargument runs something like this: “Intelligence is very complex, so we have to start from a single function to make the study tractable.”10 For many systems with weak internal connections, this is often a good choice, but for a system like the mind, whose complexity comes directly from its tangled internal interactions, the situation may be just the opposite. When the so-called “functions” are actually phenomena produced by a complex-but-unified mechanism, reproducing all of them together (by duplicating the mechanism) is simpler than reproducing only one of them.

It is for this reason that I believe that the conceptualization of ‘intelligence’ with regard to AI requires all players involved to view the process from a ‘systems thinking’ or ‘wide-lens’ perspective and to be able to observe, and make sense of, the various constituent parts. More important still is to understand how these parts interact with each other and form something that may truly be considered ‘greater than the sum of its parts’. (I swear, this line is not from a corporate presentation.)

VII. AI as the ‘creator of new principles and axioms’

According to this formulation of intelligence, what would distinguish intelligent systems from unintelligent systems would be their postulations, applicable environments, and basic principles of information processing. As a system adapting to its environment with insufficient knowledge and resources, an intelligent system should have many cognitive functions, but they are better thought of as emergent phenomena than as well-defined tools used by the system.

By learning from its experience, the system potentially can acquire the capacity to solve hard problems – actually, hard problems are those for which a solver (human or computer) has insufficient knowledge and resources – but it has no such built-in capacity, and thus, without proper training, no capacity is guaranteed, and acquired capacities can even be lost.

Because the human mind also follows the above principles, we would hope that such a system would behave similarly to human beings, but the similarity would exist at a more abstract level than that of concrete behaviors. Due to the fundamental difference between human experience/hardware and computer experience/hardware, the system is not expected to accurately reproduce masses of psychological data or to pass a Turing Test. Finally, although the internal structure of the system has some properties in common with a description of the human mind at the sub-symbolic level, it is not an attempt to simulate a biological neural network brick by brick (or neuron-by-neuron).

In summary, the structure approach contributes to neuroscience by building brain models, the behavior approach contributes to psychology by providing explanations of human behavior, the capacity approach contributes to application domains by solving practical problems, and the function approach contributes to computer science by producing new software and hardware for various computing tasks. Though all of these are valuable for various reasons, and helpful in the quest for AI, these approaches do not, in my opinion, concentrate on the essence of intelligence.

VIII. Reverse-engineering (kind of) the final definition of ‘Intelligence’

So then, how should an intelligent mind be conceived and conceptualized by AI researchers? There are three intrinsic elements of the human brain that drive our evolution as an intelligent species: first, interaction with the environment and stimuli; second, adapting our approach and behaviour based on our interaction with the environment; and third, functioning with insufficient resources and knowledge. Any such intelligent system must then be designed under the assumption that knowledge and resources are insufficient in some, if not all, aspects. Consequently, adaptation is necessary. Most current reasoning systems developed for AI fall into this category. Accordingly, pure-axiomatic systems are not intelligent at all (regardless of their efficacy in hard sciences, mathematics and pure computational fields), non-axiomatic systems are intelligent, and semi-axiomatic systems are intelligent in certain aspects.

Points (a), (b), and (c) deal with the idea of functioning with insufficient resources and knowledge as posited above; (d) deals with the idea of adaptability as intelligence; and (e) covers the environment and stimuli.

(a) With finite capability: Being finite means that the AI’s information processing capability (such as how fast it can run and how much it can remember) is roughly constant over any period of time. This requirement seems trivial, as any concrete system is surely finite. However, to acknowledge the finite nature means the system should manage its own resources, rather than merely spending them. This requirement is mainly for the theoretical models, since most traditional ones seem to operate from a place of ignorance vis-a-vis resource restrictions. Think about it this way: the human brain itself knows that it has computational limitations. There are only so many things that we can deal with at the same time or think of properly without running our minds into oblivion and losing the bolts of our brain. In that sense, there is an innate selection mechanism through which we pick our ‘processes’ and manage our resources.
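To make "managing rather than merely spending" resources a little more concrete, here is a minimal sketch of one possible selection mechanism: a greedy choice by priority per unit of cost under a fixed capacity. The `Task` fields, the scoring rule, and the example numbers are all assumptions made for illustration; nothing here is claimed to be how a brain or any particular AI system allocates resources.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    cost: int        # hypothetical units of processing capacity (must be > 0)
    priority: float  # hypothetical importance score


def select_tasks(tasks, capacity):
    """Greedily pick tasks by priority per unit cost until capacity runs out."""
    chosen, remaining = [], capacity
    ranked = sorted(tasks, key=lambda t: t.priority / t.cost, reverse=True)
    for task in ranked:
        if task.cost <= remaining:
            chosen.append(task)
            remaining -= task.cost
    return chosen, remaining


tasks = [Task("read", 4, 8.0), Task("plan", 3, 3.0), Task("daydream", 5, 1.0)]
chosen, left_over = select_tasks(tasks, capacity=8)
print([t.name for t in chosen], left_over)
```

With a capacity of 8, the sketch takes on "read" and "plan" and turns "daydream" down, which is the kind of explicit trade-off a resource-aware system has to make.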

(b) Real-Time responsiveness: Another point that bears some relationship to the idea expressed above is the notion of functioning in real time: understanding and preparing for new tasks of various types which may show up at any moment, rather than only when the AI is idle and waiting for them. It also means that every task has a response time restriction, which may take the form of an absolute deadline or of a relative temporal limit, such as “ASAP” or “after x”. In general, we can consider the value of a solution to be a decreasing function of time, so even a correct solution may become worthless when produced too late.
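One way to picture "the value of a solution as a decreasing function of time" is the sketch below. The hard cutoff for an absolute deadline and the exponential half-life for an "ASAP" style request are assumptions chosen for simplicity; the text only requires that value does not increase with delay.

```python
def solution_value(base_value, elapsed, deadline=None, half_life=None):
    """Value of a solution delivered `elapsed` time units after the request.

    deadline:  hypothetical hard cutoff; the solution is worthless afterwards.
    half_life: hypothetical soft urgency; value halves every `half_life` units.
    """
    if deadline is not None and elapsed > deadline:
        return 0.0
    if half_life is not None:
        return base_value * 0.5 ** (elapsed / half_life)
    return float(base_value)


on_time = solution_value(10, elapsed=2, half_life=2)   # delayed by one half-life
too_late = solution_value(10, elapsed=5, deadline=3)   # correct, but past the deadline
print(on_time, too_late)
```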

(c) Open System: Such an ‘intelligent system’ or AGI must then also be open. This openness must be exhibited with respect to new tasks, meaning that the AI places no restriction on their content, as long as they are expressed in an acceptable form. Every system surely has limitations in the signals its mechanisms can recognize or the languages its linguistic competence can handle, but there cannot be a restriction on what it can be told or asked to do. Even if new experience conflicts with its current beliefs, or the new job is beyond its current skill set, the system should still handle them reasonably.

(d) Adaptability: The fourth element, therefore, is adaptability. In the context of AI, this term refers to the mechanism by which a system uses its past experience to predict future situations, and uses its bounded resources to meet unbounded demands. As new experience becomes available, it will be absorbed into the system’s beliefs, so as to put its solutions and decisions on a more stable foundation. I would posit here that perhaps intelligence is nothing more than an advanced form of adaptation, which happens within the lifetime of a single being, and in which the changes produced depend on past experience. It is therefore different from the adaptation realized via an evolutionary process in a species, where the changes happen in an ‘experience-independent manner’ and are then selectively kept according to future experience (as in evolutionary computation)11. I would also suggest, some would say rather foolishly or bravely, that adaptation in this sense must refer to the attempt rather than the impact or the result stemming from such action. Even when behaviour is adjusted based on past experiences (and what I am about to say may be equally true for human beings), it would only yield a performance improvement if the future were similar to the past in certain relevant aspects. While we can assume such similarity (given insufficient knowledge), it can in no way be guaranteed, not even by means of computational power far stronger than what we possess.
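The point that adaptation is an attempt, and only pays off when the future resembles the past, can be illustrated with a toy estimator. This is a minimal sketch: the class name, the `learning_rate` parameter, and the simple "move part of the way towards each new observation" rule are assumptions for illustration, not a claim about how any real system adapts.

```python
class AdaptiveEstimator:
    """Keeps a running belief about a quantity and revises it with each observation."""

    def __init__(self, initial_belief=0.0, learning_rate=0.5):
        self.belief = initial_belief
        self.learning_rate = learning_rate  # hypothetical tuning knob

    def predict(self):
        return self.belief

    def observe(self, outcome):
        # Move the belief part of the way towards the new experience.
        self.belief += self.learning_rate * (outcome - self.belief)
        return self.belief


estimator = AdaptiveEstimator()
for outcome in [10, 10, 10]:            # past experience
    estimator.observe(outcome)

error_if_future_like_past = abs(10 - estimator.predict())
error_if_future_differs = abs(-10 - estimator.predict())
print(estimator.predict(), error_if_future_like_past, error_if_future_differs)
```

After three observations the belief has moved from 0 towards 10. If the next outcome is again 10, the adapted estimator does better than the naive starting belief; if the world flips to -10, the same adaptation makes it worse, which is exactly why the result cannot be guaranteed.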

(e) Environment and Stimuli: I will admit that a part of the environment and stimuli has been incorporated into the first three points that I laid down at the beginning of this section. However, as part of this final element of ‘intelligence’, it would be apt to focus on how the AI itself interacts with the environment. The solution of how to interact must use “algorithm-defined” steps to form one-time processes for each problem-instance, so as to process them in a case-by-case manner rather than generalizing across the board and falling into a self-inflicted cognitive trap. Owing to the adaptiveness of the system, the internal state changes it experiences will be acyclic, and so will the changes in its environment. This renders the problem-solving processes no longer accurately repeatable, so there is no fixed algorithm for solving a type of problem (which is a set of problem-instances). The design work should ideally cover both the algorithmic steps that serve as the building blocks of problem-solving processes and the mechanism that combines these steps at run time for each individual problem-instance. Both tasks are independent of the application domain and the specific features of the problems in the domain.

I would, therefore, state that to me, the concept of ‘Intelligence’ is:

"The ability to adapt to your environment and generate real-time responses to open questions with insufficient knowledge and resources"

Of course, everyone is open to choosing their own interpretation and definition of ‘Intelligence’. We cannot even surmise whether one day there may be such a universal definition. The difficulty of this is of the same caliber as that of constructing your own philosophy: what you believe in is what your efforts yield.

IX. Some Questions to Ponder

I will admit that such a conception of intelligence leaves more unanswered and doubtful than solved and certain, even to my mind. But the very idea of scientific inquiry and of rational thought is to learn more in order to be able to question more. In fact, it is a widely accepted notion that the bounds of the unknown always grow proportionately bigger than the bounds of the known do. As we find more answers, we will pose more questions. And this perhaps is the beauty of the human mind, of our sentience, which thus far no AI has been able to successfully replicate in an unbounded, open process.

For instance, it has long been argued that a core part of the human ‘Theory of Mind’ is that we recognise that other agents do not base their decisions directly on the state of the world or on the factual circumstances as they exist in reality, but rather on an internal representation of the state of the world. This is usually framed as an understanding that other agents hold beliefs about the world: they may have knowledge that we do not; they may be ignorant of something that we know; and, most dramatically, they may believe the world to be one way, when we in fact know this to be mistaken.

An understanding of this last possibility – that others can have false beliefs – has become the most celebrated indicator of human intelligence (as much as the absolutely rational, data-driven thinkers may argue otherwise), and there has been considerable research into how much children, infants, apes, and other species carry this capability. Can we conceive of an AI / AGI that is capable of this level of understanding and intellect? To possess such intelligence would require the AGI to have the ability to separate out what it itself knows, and what the others (the other AIs, people etc.) can plausibly know, without relying on a hand-engineered, explicit observation model for the agent. What about factoring in distance-based effects of changes in the environment on the actors in such a world?
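To make the false-belief idea concrete, here is a deliberately simple sketch in which each agent carries its own picture of the world, updated only by what it witnesses. Note that this is precisely the hand-engineered, explicit observation model that the paragraph above says a genuinely intelligent system should not need; it is shown only to pin down what is being asked for. All names (`Agent`, `move`, `predict_search`, the marble scenario) are hypothetical.

```python
class Agent:
    def __init__(self, name):
        self.name = name
        self.beliefs = {}     # this agent's internal picture: object -> place
        self.present = True   # whether the agent can currently observe events


def move(world, agents, obj, place):
    """Move an object; only agents who are present update their beliefs."""
    world[obj] = place
    for agent in agents:
        if agent.present:
            agent.beliefs[obj] = place


def predict_search(agent, obj):
    """Predict where an agent will look: its belief, not the true state."""
    return agent.beliefs.get(obj)


world = {}                               # the actual state: object -> place
first, second = Agent("first"), Agent("second")

move(world, [first, second], "marble", "basket")
first.present = False                    # the first agent leaves the room
move(world, [first, second], "marble", "box")

print(world["marble"], predict_search(first, "marble"), predict_search(second, "marble"))
```

The true location is the box, the second agent believes as much, and the absent first agent is still predicted to look in the basket. Tracking that gap between the world and someone else's picture of it is the capability in question.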

2. Benjamin Drukarch, Hanna A. Holland, Martin Velichkov, Jeroen J.G. Geurts, Pieter Voorn, Gerrit Glas, Henk W. de Regt, Thinking about the nerve impulse: A critical analysis of the electricity-centered conception of nerve excitability

3. G. Reeke and G. Edelman, Real brains and artificial intelligence, Daedalus 117(1) (1988)

4. J. L. McClelland, D. E. Rumelhart, and G. E. Hinton, The Appeals of Parallel Distributed Processing, Stanford

5. John Searle, Minds, Brains and Programs, The Behavioral and Brain Sciences (1980)

6. Alan Turing, Computing Machinery and Intelligence, Mind LIX (236): 433-460

7. Robert M. French, Subcognition and the Limits of the Turing Test, Mind, Volume XCIX, Issue 393, pp. 53-65 (1990)

8. Douglas Hofstadter, Gödel, Escher, Bach: an Eternal Golden Braid (2nd edition)

9. D. Chalmers, R. French, D. Hofstadter, High-level perception, representation, and analogy: a critique of artificial intelligence methodology, Journal of Experimental and Theoretical Artificial Intelligence (1992)

10. Brenden M. Lake, Tomer D. Ullman, Joshua B. Tenenbaum and Samuel J. Gershman, Building machines that learn and think like people, Behavioral and Brain Sciences Vol. 40

11. J. H. Holland, Adaptation in Natural and Artificial Systems: An Introductory Analysis With Applications to Biology, Control, and Artificial Intelligence, MIT Press (1992)